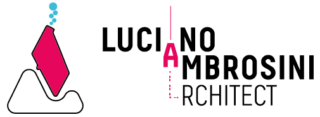

AI Outpainting with Stable Diffusion via Grasshopper

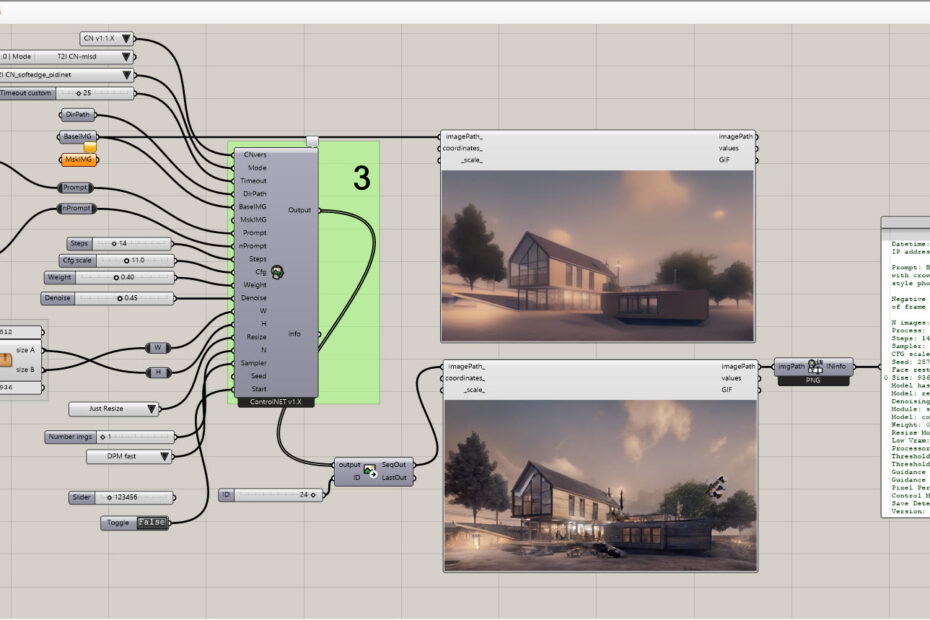

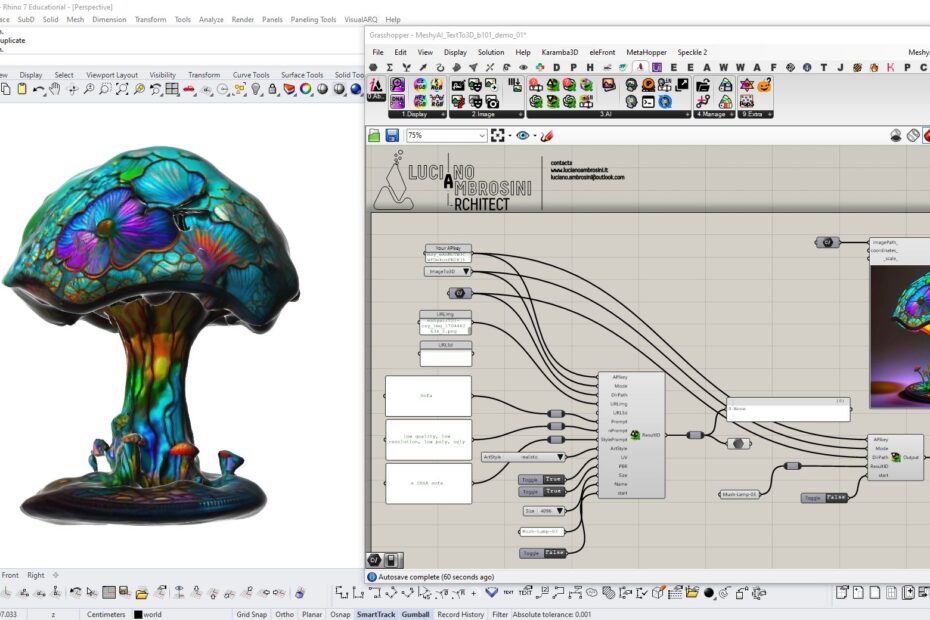

The Ambrosinus Toolkit v1.2.8 now includes a new feature: AI outpainting. It adds another Stable Diffusion-based AI tool to Grasshopper, so you can explore your design beyond the edges of the original image frame!