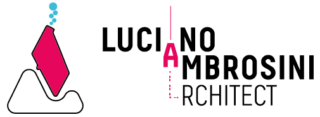

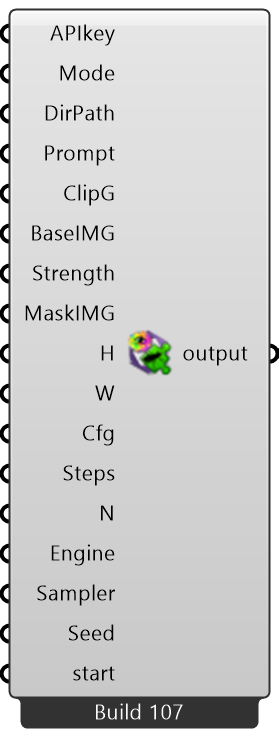

That said, with Build-106 of the StabilityAI-GH component I have added the latest features of the “stability-sdk” Python library.

In summary, you can use the following features:

- Text-to-Image mode

- Image-to-Image mode

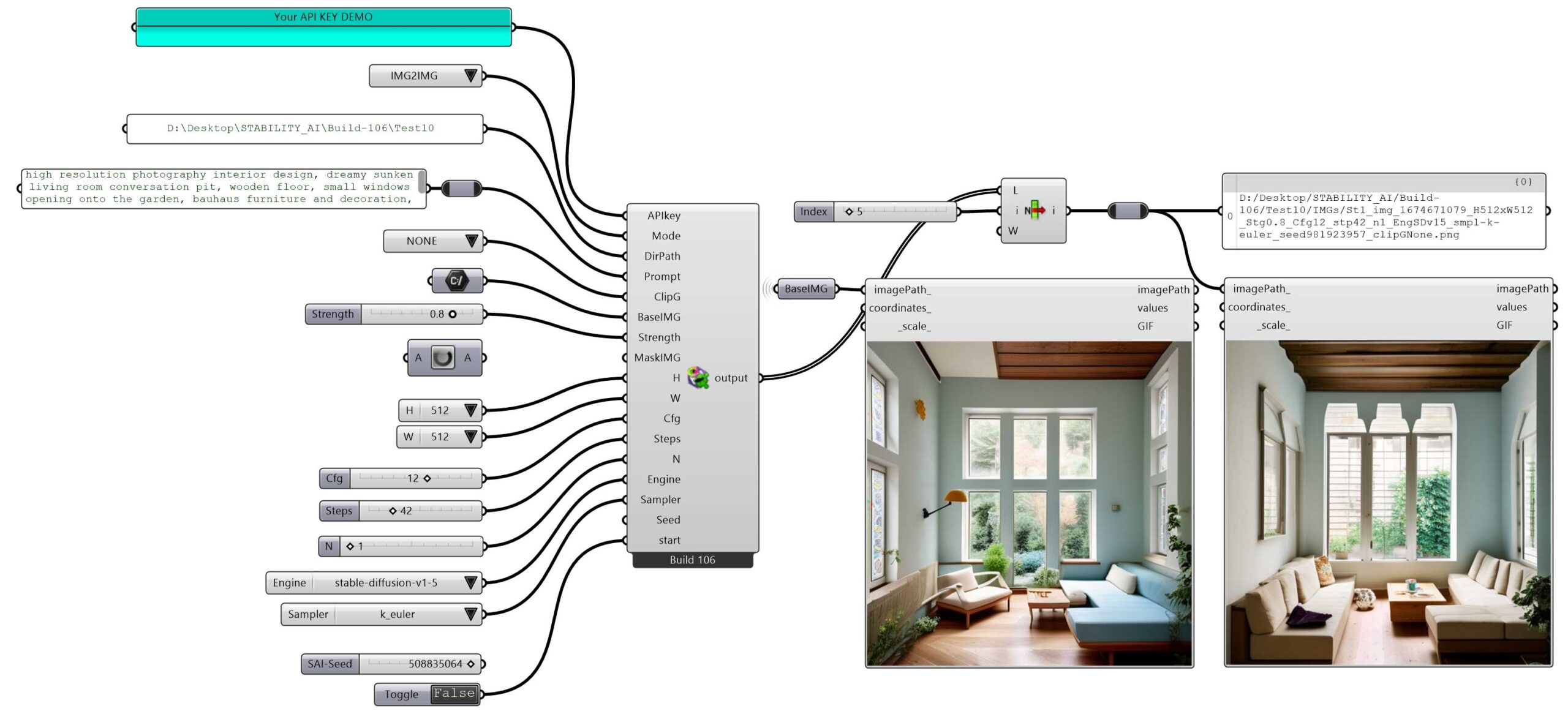

- Image-to-Image masking mode (with grayscale and blurred images as masks)

- Apply CLIP guidance presets (None, Simple, FastBlue, FastGreen, Slow, Slower and Slowest)

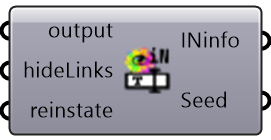

By using a few new or updated components, such as GrayGaussMask, FileNamer and SdINinfo, it is possible to:

- Generate a grayscale image and a blurred one using a few Python libraries; you have full control over the black/white areas

- Generate a filename that never overwrites an existing file, and also save the mask images in the “IMGs” folder

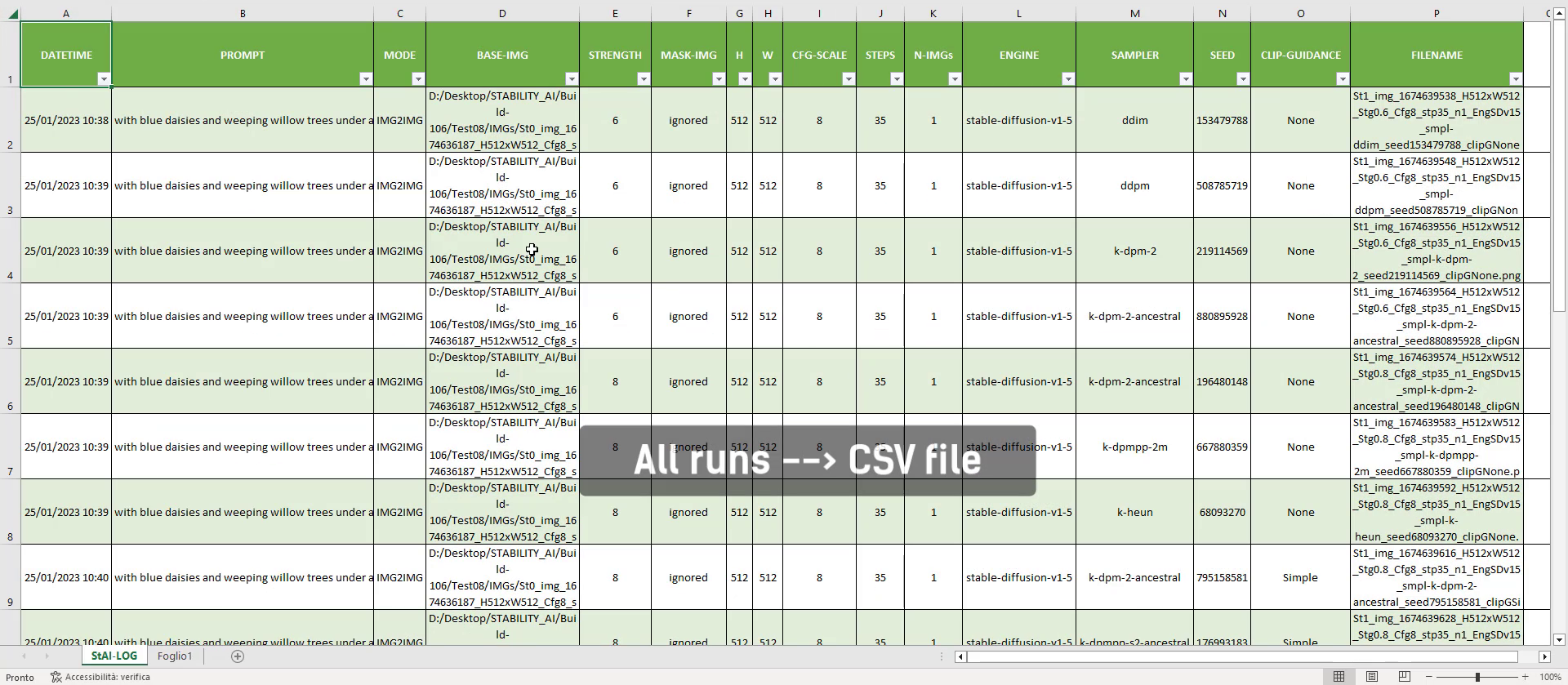

- Read all useful parameters used in generating your AI art image

- Track all runs in a single CSV file
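To illustrate the idea of generating a filename without overwriting anything, here is a minimal sketch. It is not the FileNamer component's actual code: the function name `next_free_path` and the `stem_000.png` numbering scheme are assumptions made only for this example.

```python
import tempfile
from pathlib import Path


def next_free_path(folder, stem, ext=".png"):
    """Return the first path 'stem_NNN.ext' inside folder that does not exist yet.

    Hypothetical helper: the numbering scheme is an assumption for this sketch.
    """
    folder = Path(folder)
    folder.mkdir(parents=True, exist_ok=True)
    index = 0
    while True:
        candidate = folder / f"{stem}_{index:03d}{ext}"
        if not candidate.exists():
            return candidate
        index += 1


# Small demo in a throwaway directory, so nothing real is touched.
with tempfile.TemporaryDirectory() as tmp:
    imgs = Path(tmp) / "IMGs"
    first = next_free_path(imgs, "mask")
    first.touch()  # pretend the image was saved
    second = next_free_path(imgs, "mask")
    print(first.name, second.name)
```

Because the helper checks the folder on every call, a new run simply gets the next free index instead of replacing an earlier image or mask.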

About the GrayGaussMask component: in order to run it, you need to install the torchvision Python library as shown here:

pip install torchvision

This library is ~160 MB, so wait until the message confirming a successful installation appears.
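For readers curious about what a grayscale, Gaussian-blurred mask actually is, here is a library-free sketch. The component itself relies on torchvision; the code below does not reproduce it. It only shows the principle of painting a hard black/white region and then softening its edges. All names (`blurred_mask`, `box`, `radius`, `sigma`) are hypothetical.

```python
import math


def gaussian_kernel(radius, sigma):
    """1-D Gaussian weights, normalized so that they sum to 1."""
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_rows(img, kernel):
    """Blur every row of a 2-D list of floats, clamping at the borders."""
    radius = len(kernel) // 2
    out = []
    for row in img:
        n = len(row)
        new_row = []
        for x in range(n):
            acc = 0.0
            for k, w in enumerate(kernel):
                xi = min(max(x + k - radius, 0), n - 1)  # clamp to the edge pixel
                acc += w * row[xi]
            new_row.append(acc)
        out.append(new_row)
    return out


def transpose(img):
    return [list(col) for col in zip(*img)]


def blurred_mask(width, height, box, radius=2, sigma=1.0):
    """Paint box=(x0, y0, x1, y1) white (255) on black (0), then blur it.

    Returns (hard, soft): the sharp mask and its Gaussian-blurred version.
    The blur is applied as two 1-D passes (rows, then columns).
    """
    x0, y0, x1, y1 = box
    hard = [[255.0 if (x0 <= x < x1 and y0 <= y < y1) else 0.0
             for x in range(width)]
            for y in range(height)]
    kernel = gaussian_kernel(radius, sigma)
    soft = transpose(blur_rows(transpose(blur_rows(hard, kernel)), kernel))
    return hard, soft


hard, soft = blurred_mask(16, 16, box=(4, 4, 12, 12))
print(round(soft[8][8]), round(soft[4][8]), round(soft[0][0]))
```

The interior of the box stays white, the far corners stay black, and the pixels along the border take intermediate gray values, which is what gives a soft transition between the two areas.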

Below are these components in action

I have always said:

A Computational Designer is also (but not exclusively) a data storyteller

While you are exploring your design and pushing your creativity forward, the StabilityAI-GH component (the same applies to OpenAI) stores all data in this way:

- Images are stored in the “YourCustomFolder\IMGs” folder

- Metadata (for each run) is stored in the “YourCustomFolder\TXTs” folder

- All your runs are stored in a single CSV file
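The storage layout above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the component's implementation: the file name `runs.csv`, the column names in `FIELDS`, and the use of JSON inside the TXT file are all hypothetical.

```python
import csv
import json
import tempfile
from pathlib import Path

# Hypothetical columns; the real component may record different parameters.
FIELDS = ["name", "prompt", "seed", "steps"]


def log_run(root, name, params):
    """Store per-run metadata in TXTs/ and append one row to a shared CSV."""
    root = Path(root)
    txts = root / "TXTs"
    txts.mkdir(parents=True, exist_ok=True)
    (root / "IMGs").mkdir(exist_ok=True)  # images would be saved here

    # One metadata file per run.
    (txts / f"{name}.txt").write_text(json.dumps(params, indent=2),
                                      encoding="utf-8")

    # One CSV for all runs: write the header only when the file is created.
    csv_path = root / "runs.csv"
    is_new = not csv_path.exists()
    with csv_path.open("a", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=FIELDS, extrasaction="ignore")
        if is_new:
            writer.writeheader()
        writer.writerow({"name": name, **params})
    return csv_path


# Demo in a temporary folder standing in for "YourCustomFolder".
with tempfile.TemporaryDirectory() as tmp:
    log_run(tmp, "run_000", {"prompt": "a glass pavilion", "seed": 1, "steps": 30})
    path = log_run(tmp, "run_001", {"prompt": "a timber tower", "seed": 2, "steps": 50})
    print(path.read_text(encoding="utf-8"))
```

Appending to one file keeps the whole exploration history in a single table that can be opened in a spreadsheet or read back into Grasshopper later.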

Below is the video demo.