Recently, many architects and designers have been exploring different AI platforms. This is exciting!

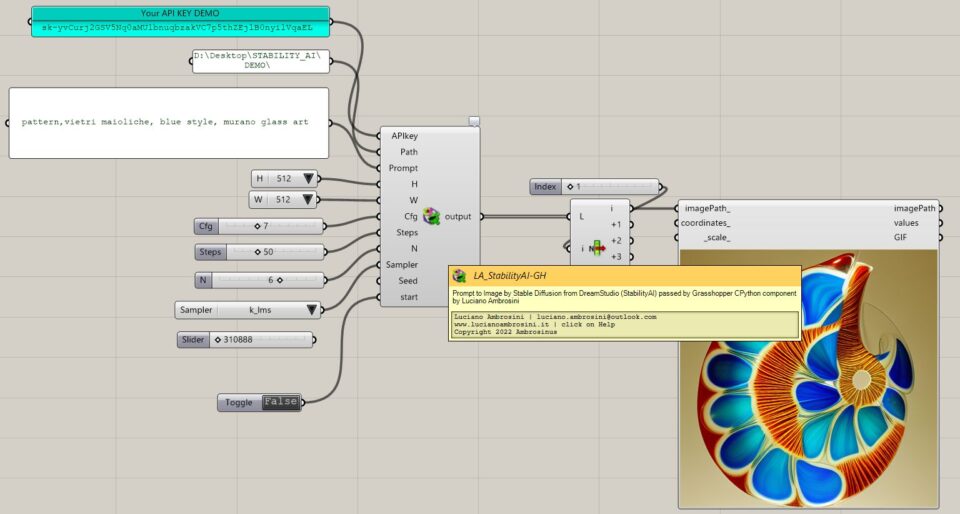

Thanks to the Stability AI API documentation for Stable Diffusion (v1.5) on DreamStudio, it is possible to integrate the “prompt to image” process into Grasshopper through my component “LA_StabilityAI-GH”.

The component is now available on my GitHub page, and it will be included in the “AI” sub-category of the Ambrosinus-Toolkit plugin.

Useful premises – installation prerequisite steps

1 – I recommend reading this page, as it helps to understand how Stability AI works;

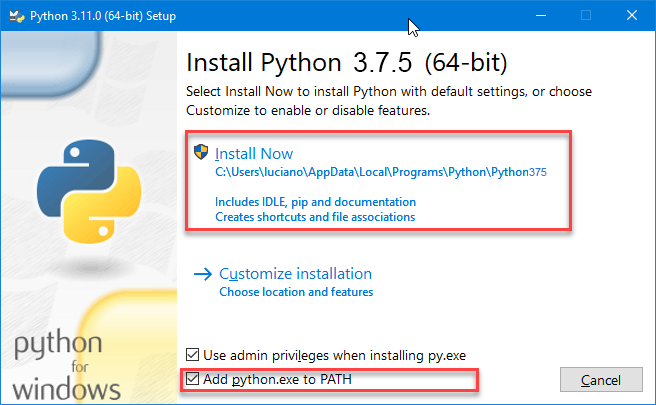

2 – Install Python 3.7.5 (the version I have tested). It is really important to accept the default settings and tick the “Add python.exe to PATH” option;

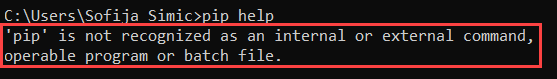

3 – Check your pip installation: running the command below should print a list of commands and general options. If it does, skip to step 4:

Launch the command prompt window:

- Press Windows Key + X.

- Click Run.

- Type in cmd.exe and hit enter.

- In cmd.exe type:

pip help

Some machines do not have pip installed (full source here).

If you encounter this issue, follow these steps:

- Launch a command prompt if it isn’t already open. To do so, open the Windows search bar, type cmd and click on the icon.

- Then, run the following command to download the get-pip.py file:

curl https://bootstrap.pypa.io/get-pip.py -o get-pip.py

- Finally, run the downloaded script to install pip:

python get-pip.py

4 – Install the “stability-sdk” library with pip from the Windows command prompt (cmd.exe):

pip install stability-sdk

4.1 – Restart your machine 😉

5 – On the Grasshopper side (super important!): install the excellent GH_CPython component by MahmoudAbdelRahman (the Python component with the black icon; check that its files are “unblocked”). It is required to import the stability-sdk library and the other libraries my ghuser component needs;

5.1 – Restart your Rhino
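You can double-check steps 2 to 4 in one go by running a short script with the python.exe installed in step 2. For example, save it as check_env.py (a hypothetical name) and run `python check_env.py` in cmd.exe. The following is a minimal sketch that uses only the standard library, and the report keys are illustrative. `stability_sdk` is the module name that the stability-sdk package installs.

```python
import importlib.util
import sys

TESTED_VERSION = (3, 7, 5)  # the Python release the post was tested with


def environment_report():
    """Report the prerequisites from steps 2-4 for the interpreter running this script."""
    version = tuple(sys.version_info[:3])
    return {
        # Step 2: which Python is running, and whether it is the tested release.
        "python_version": version,
        "matches_tested_version": version == TESTED_VERSION,
        # Step 3: pip is importable by this interpreter (what `pip help` checks in cmd.exe).
        "pip_available": importlib.util.find_spec("pip") is not None,
        # Step 4: the stability-sdk package provides the `stability_sdk` module.
        "stability_sdk_installed": importlib.util.find_spec("stability_sdk") is not None,
    }


if __name__ == "__main__":
    for key, value in environment_report().items():
        print(f"{key}: {value}")
```

If `pip_available` or `stability_sdk_installed` is False, repeat the corresponding step before moving to Grasshopper.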

One small issue I encountered concerns the syntax of the folder path. It generally has the format “C:\Desktop\test_folder”, which works like a charm on several machines. In some cases, as happened on my laptop, you need to replace each backslash ‘\’ with a forward slash ‘/’: “C:/Desktop/test_folder”.

I have tested the procedure above on several machines other than my DEV workstation, just to be sure.
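If you build the “Path” input from a script, you can automate the backslash-to-slash conversion described above. The following is a minimal sketch that uses Python's standard `pathlib`. `to_forward_slashes` is a hypothetical helper and not part of the component. Windows file APIs accept forward slashes, so the converted path still points at the same folder.

```python
from pathlib import PureWindowsPath


def to_forward_slashes(folder):
    """Convert a Windows folder path such as C:\\Desktop\\test_folder to C:/Desktop/test_folder."""
    return PureWindowsPath(folder).as_posix()


# In Python source, write Windows paths as raw strings: in "C:\Desktop\test_folder"
# the "\t" would otherwise be read as a tab character.
print(to_forward_slashes(r"C:\Desktop\test_folder"))  # C:/Desktop/test_folder
```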

Then…

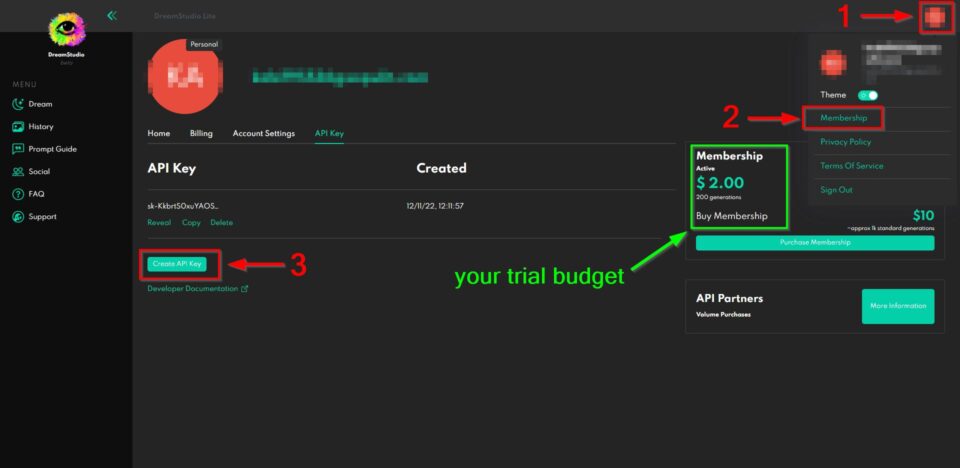

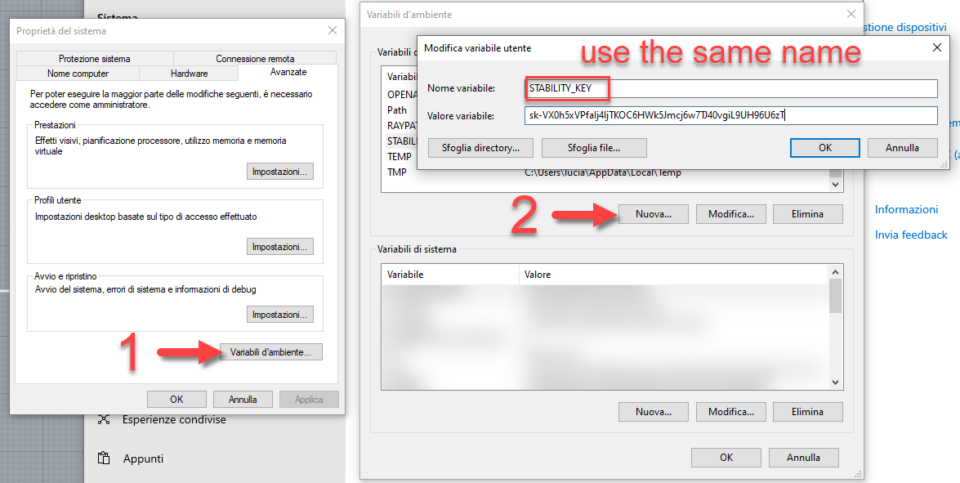

Generate a personal API key from your DreamStudio account and save it in your Windows environment variables under the name “STABILITY_KEY”.
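The following is a minimal sketch of the key lookup that this post describes. A key wired into the “APIkey” input takes precedence; otherwise the `STABILITY_KEY` environment variable is used. `resolve_api_key`, `mask_key`, and the error message are hypothetical and are not the component's actual code. A process inherits its environment variables when it starts, so a key saved after Rhino was launched only becomes visible after you restart Rhino.

```python
import os


def resolve_api_key(apikey_input=None, env_var="STABILITY_KEY"):
    """Return the key from the APIkey input if given, else from the environment variable."""
    # Hypothetical helper mirroring the fallback described in the post.
    if apikey_input and apikey_input.strip():
        return apikey_input.strip()
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(
            f"No API key found: connect one to APIkey or set {env_var} and restart Rhino"
        )
    return key


def mask_key(key, visible=4):
    """Hide all but the last few characters, e.g. before printing or logging the key."""
    if len(key) <= visible:
        return "*" * len(key)
    return "*" * (len(key) - visible) + key[-visible:]
```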

Each generation consumes a small amount of your trial credit (around 200 images at standard resolution cost $2.00). Don’t share your API key.
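The credit figure above works out to about $0.01 per standard-resolution image ($2.00 / 200). The following is a small sketch for budgeting an exploration session. It assumes that flat rate, but real pricing may change and can depend on settings such as resolution, so treat the numbers as rough estimates. The function names are hypothetical.

```python
from decimal import Decimal

# Figures quoted in the post: about 200 standard-resolution images for $2.00.
QUOTED_IMAGES = 200
QUOTED_COST_USD = Decimal("2.00")
COST_PER_IMAGE = QUOTED_COST_USD / QUOTED_IMAGES  # Decimal("0.01")


def estimate_cost(n_images):
    """Approximate cost in USD of generating n_images at standard resolution."""
    return (COST_PER_IMAGE * n_images).quantize(Decimal("0.01"))


def images_affordable(credit_usd):
    """Approximate number of standard-resolution images a credit balance covers."""
    return int(Decimal(str(credit_usd)) / COST_PER_IMAGE)


print(estimate_cost(50))          # 0.50
print(images_affordable("2.00"))  # 200
```

`Decimal` is used instead of `float` so that currency amounts such as 0.01 are represented exactly.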

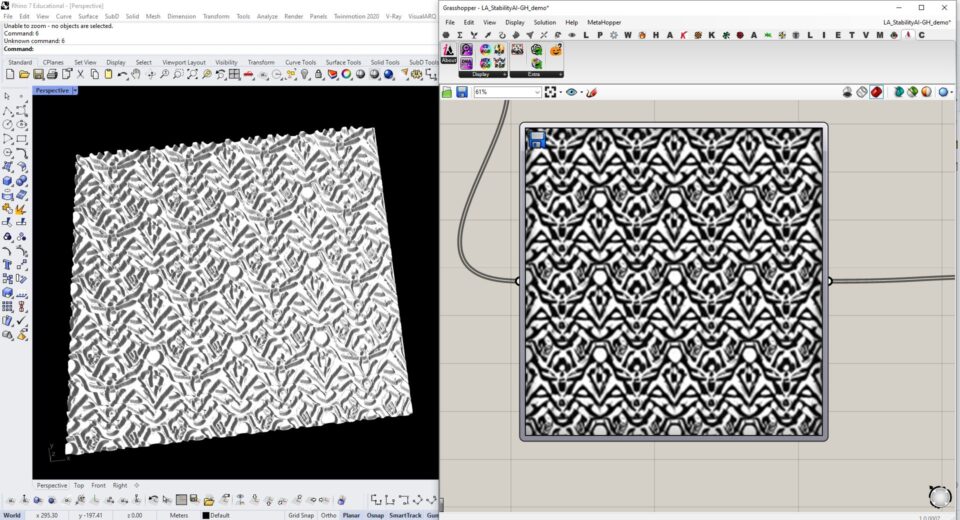

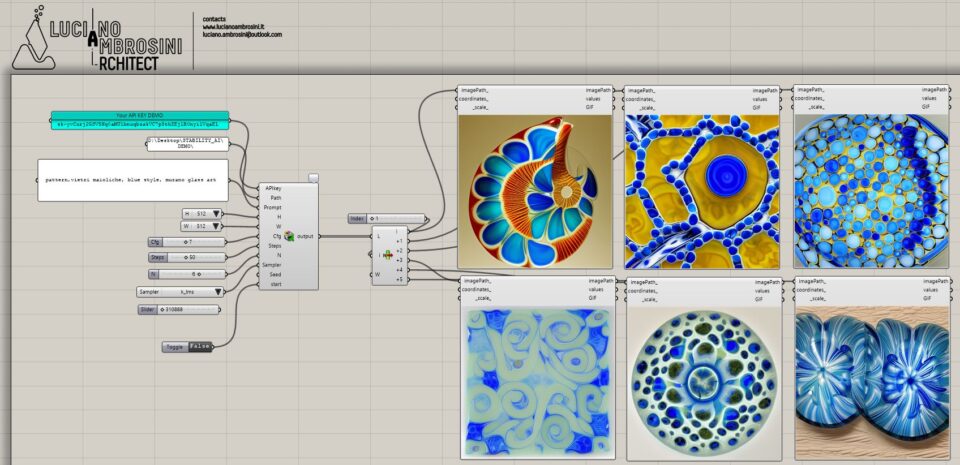

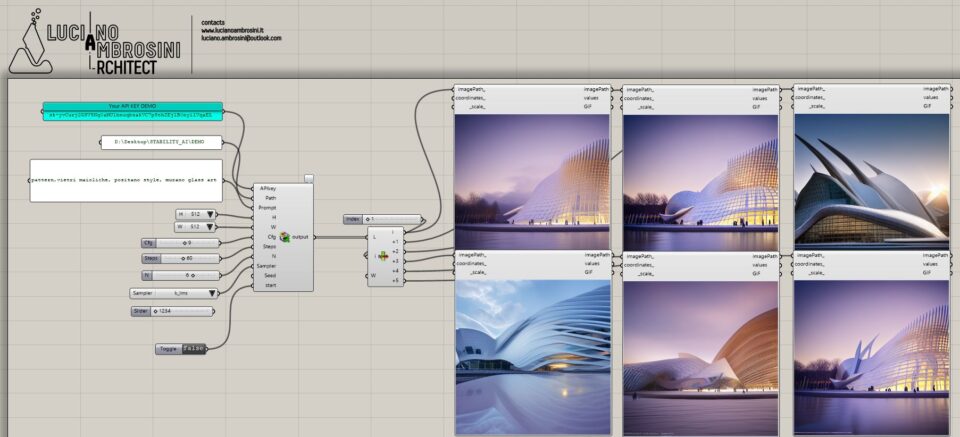

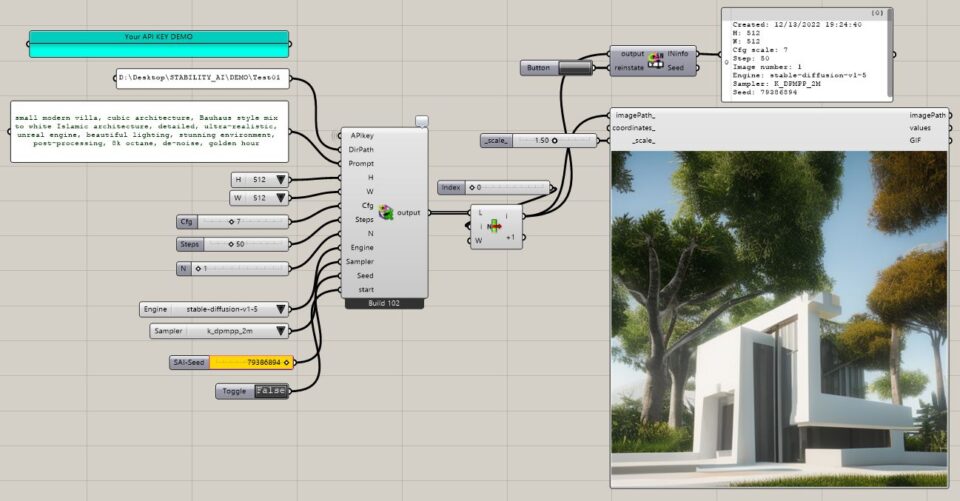

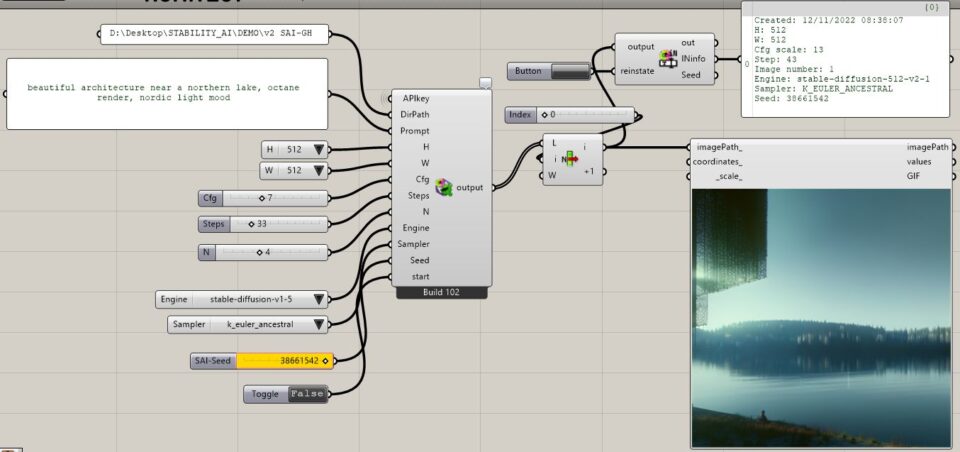

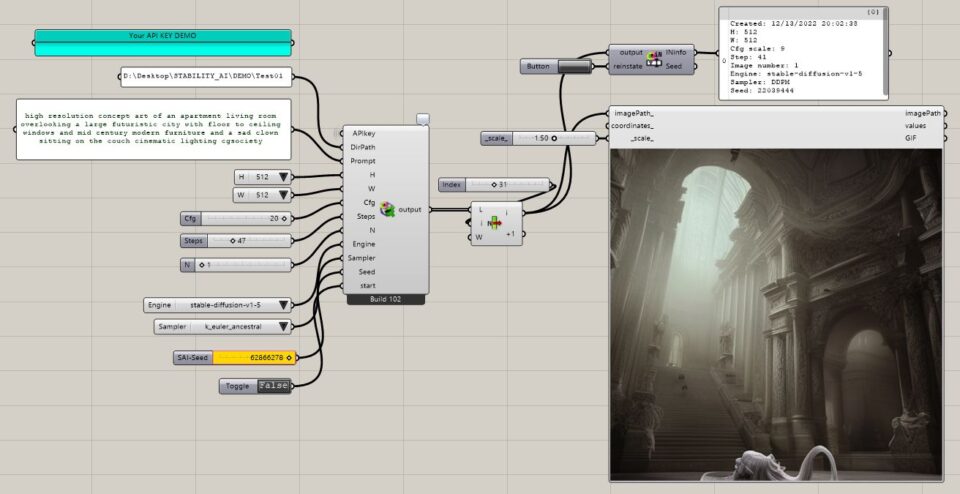

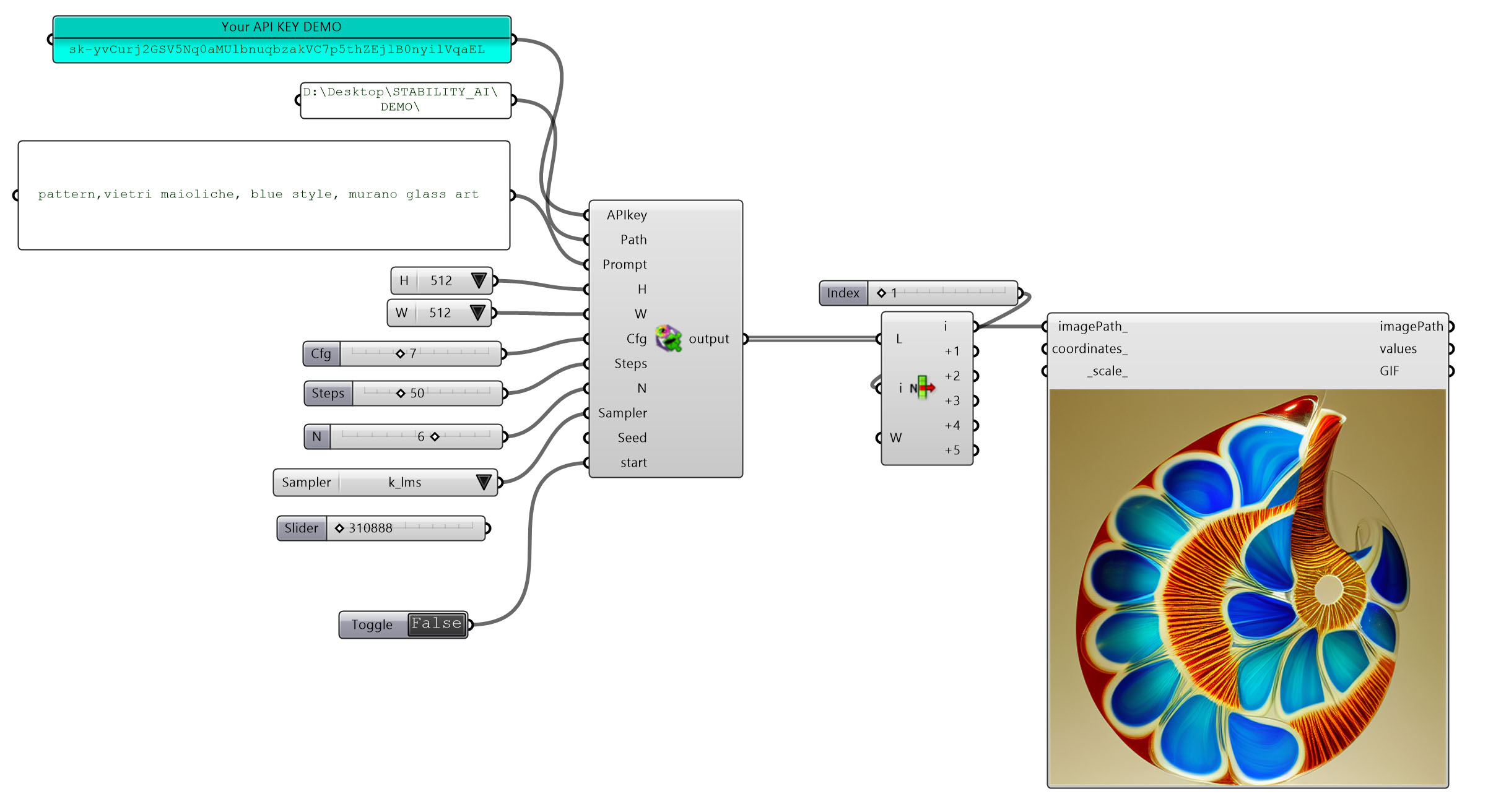

Below is the new component in action:

- You need an active internet connection, because image generation happens on the DreamStudio servers; that is also why you always need an API key;

- You can save the Stability AI API key generated on DreamStudio in the Windows environment variables, or pass it to the “APIkey” input as shown above (my demo key has been deleted; this is just a demonstration 😉). After saving the key in the Windows environment variables, restart Rhino so the component can read it. If nothing is connected to the “APIkey” input, the component reads the key stored in Windows;

- When the toggle is set to “False”, the component only reads the PNG files in the “Path” directory;

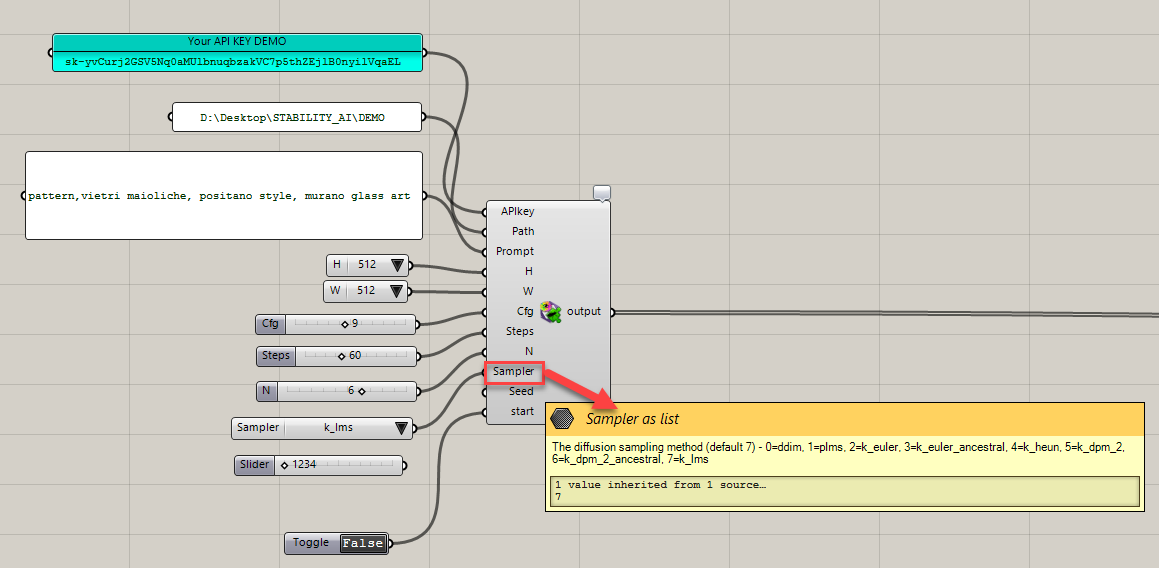
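When the toggle is False, the component only reads existing images, so no credit is spent. The following is a minimal sketch of that branch. It assumes the images sit directly in the “Path” folder. `images_to_display` and its `generate` callback are hypothetical stand-ins for the component's internals.

```python
from pathlib import Path


def list_png_files(folder):
    """Return the PNG files directly inside `folder`, sorted by name."""
    return sorted(
        p for p in Path(folder).iterdir()
        if p.is_file() and p.suffix.lower() == ".png"
    )


def images_to_display(run, folder, generate=None):
    """Call the (hypothetical) generator only when the toggle is True, then list PNGs."""
    if run and generate is not None:
        generate(folder)  # e.g. the API request that writes new PNGs into `folder`
    return list_png_files(folder)
```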

- Hover the mouse over the input parameters to read their descriptions. Some parameters accept different value ranges and, above all, you can see the default value suggested by Stability AI for each of them.

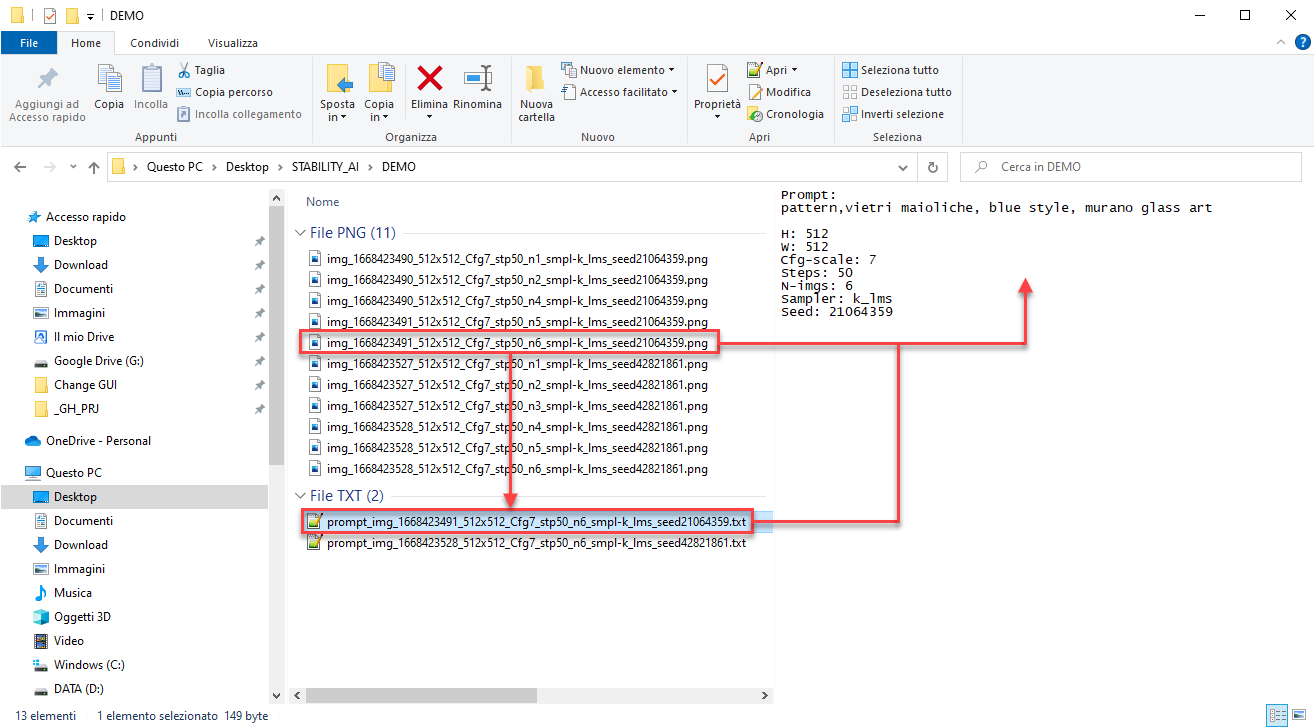

- For each prompt, the component generates a text file (.txt) containing the prompt that produced a specific image (see the image below);

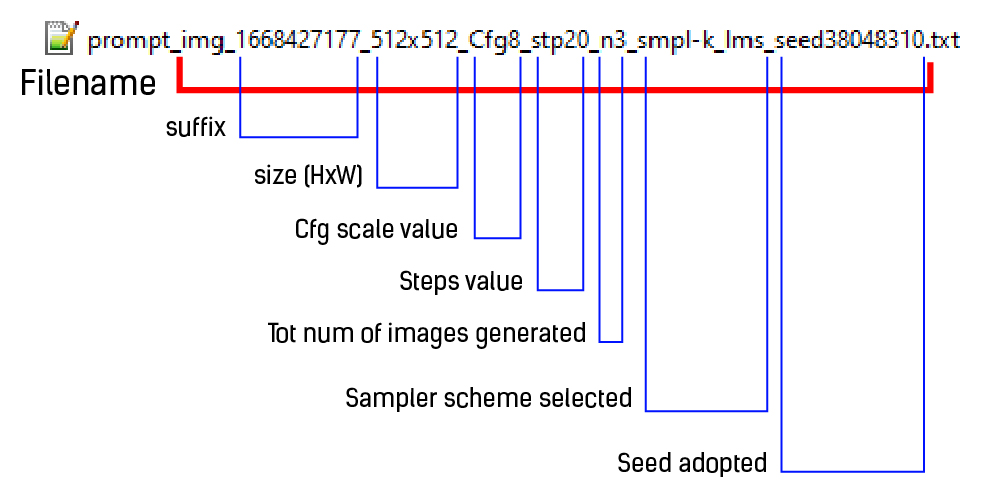
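The following is a sketch of the prompt-file idea. It assumes the text file shares the image's filename stem and lists optional settings after the prompt. The component's exact file layout is only shown in the screenshot, and `write_prompt_file` is a hypothetical helper.

```python
from pathlib import Path


def write_prompt_file(image_path, prompt, settings=None):
    """Write the prompt (and optional settings) to a .txt file next to the image."""
    txt_path = Path(image_path).with_suffix(".txt")  # same stem, .txt extension
    lines = [prompt]
    for key, value in (settings or {}).items():
        lines.append(f"{key}: {value}")
    txt_path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return txt_path
```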

- The image filename and the name of its prompt text file are both coded:

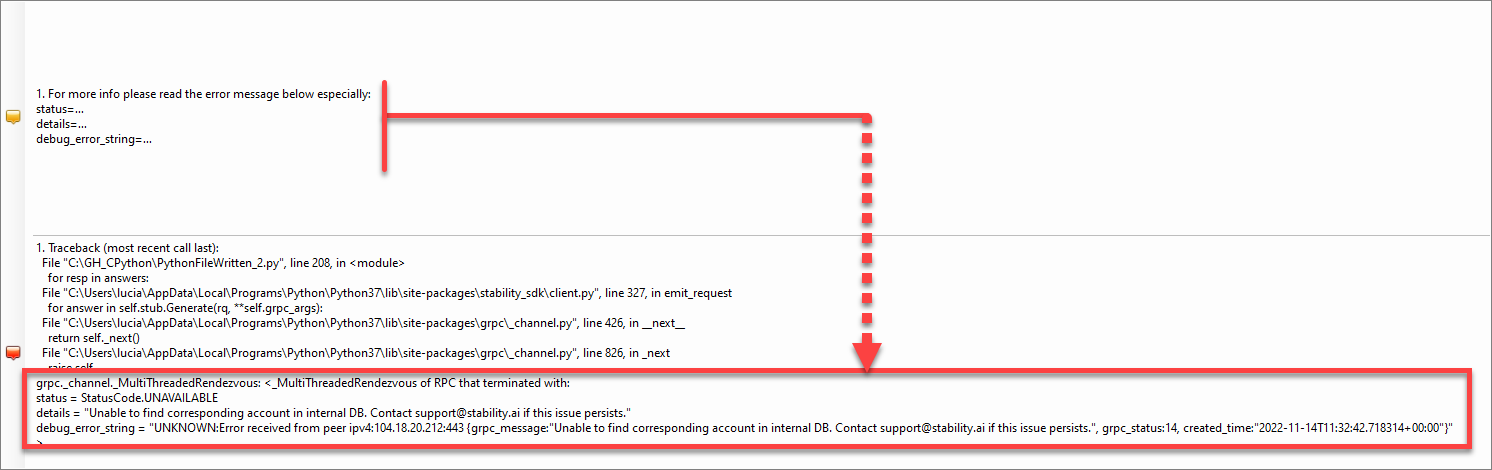
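The screenshot shows the component's own naming scheme, which is not reproduced here. As an illustration only, the following shows one hypothetical way to code the generation settings into a filename stem so they can be read back later. Engine names use hyphens and sampler names use single underscores, so a double underscore separates fields unambiguously. `pick_seed` draws a random seed when none is given; this assumes the unsigned 32-bit seed range accepted by the Stability API.

```python
import random

SEP = "__"  # hypothetical separator: never appears inside engine or sampler names


def pick_seed(seed=None):
    """Keep a user-supplied seed; otherwise draw one in the unsigned 32-bit range."""
    return random.randint(0, 2**32 - 1) if seed is None else int(seed)


def encode_image_stem(engine, sampler, seed, steps, cfg_scale, width, height):
    """Build a filename stem (no extension) that records the generation settings."""
    fields = [
        engine,
        sampler,
        f"seed{seed}",
        f"steps{steps}",
        f"cfg{cfg_scale:g}",  # 7.0 -> "7", 7.5 -> "7.5"
        f"{width}x{height}",
    ]
    return SEP.join(fields)


seed = pick_seed()  # no Seed input: a random seed is drawn and recorded in the name
stem = encode_image_stem("stable-diffusion-v1-5", "k_euler_ancestral", seed, 30, 7.0, 512, 512)
image_name, prompt_name = stem + ".png", stem + ".txt"
```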

In case of a server error, such as an input error, expired API key credentials, or insufficient credit, have a look here:

In the case above, the error refers to a revoked (cancelled) API key.

NOTE: sometimes the engine generates a blurred image; in this case, try changing some parameters (starting with the Seed number).

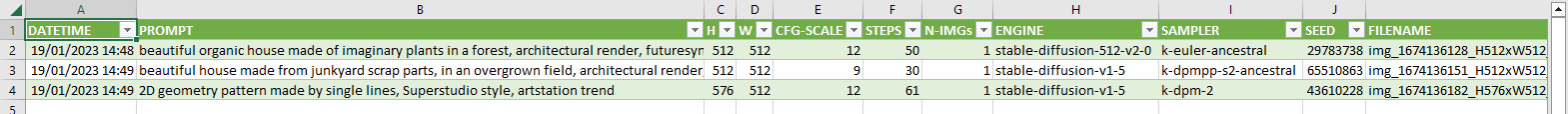

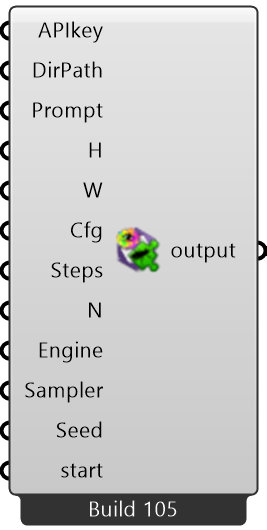

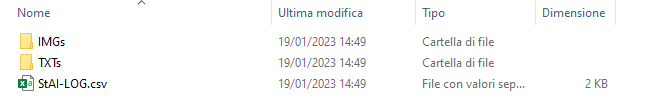

Since Build 105, output files are organized into an IMGs folder (all images) and a TXTs folder (all text prompts). This build also creates a CSV file that logs all AI requests and generation processes.
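The following is a sketch of that output layout. The IMGs and TXTs folder names come from the post, while the CSV file name and its columns are hypothetical, because the component's real log format is not shown here. It uses only the standard `csv`, `datetime`, and `pathlib` modules.

```python
import csv
import datetime
from pathlib import Path

# Hypothetical column set; the component's real log layout may differ.
LOG_FIELDS = ["timestamp", "prompt", "engine", "sampler", "seed", "image_file"]


def prepare_output_dirs(root):
    """Create (if needed) the IMGs and TXTs sub-folders under the output path."""
    root = Path(root)
    img_dir, txt_dir = root / "IMGs", root / "TXTs"
    for folder in (img_dir, txt_dir):
        folder.mkdir(parents=True, exist_ok=True)
    return img_dir, txt_dir


def append_log_row(root, row, log_name="generation_log.csv"):
    """Append one request to the CSV log, writing the header the first time."""
    log_path = Path(root) / log_name
    write_header = not log_path.exists()
    record = {"timestamp": datetime.datetime.now().isoformat(timespec="seconds")}
    record.update(row)
    with log_path.open("a", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=LOG_FIELDS)  # missing keys are written as ""
        if write_header:
            writer.writeheader()
        writer.writerow(record)
    return log_path
```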

Below is the video DEMO.

Since Build-102

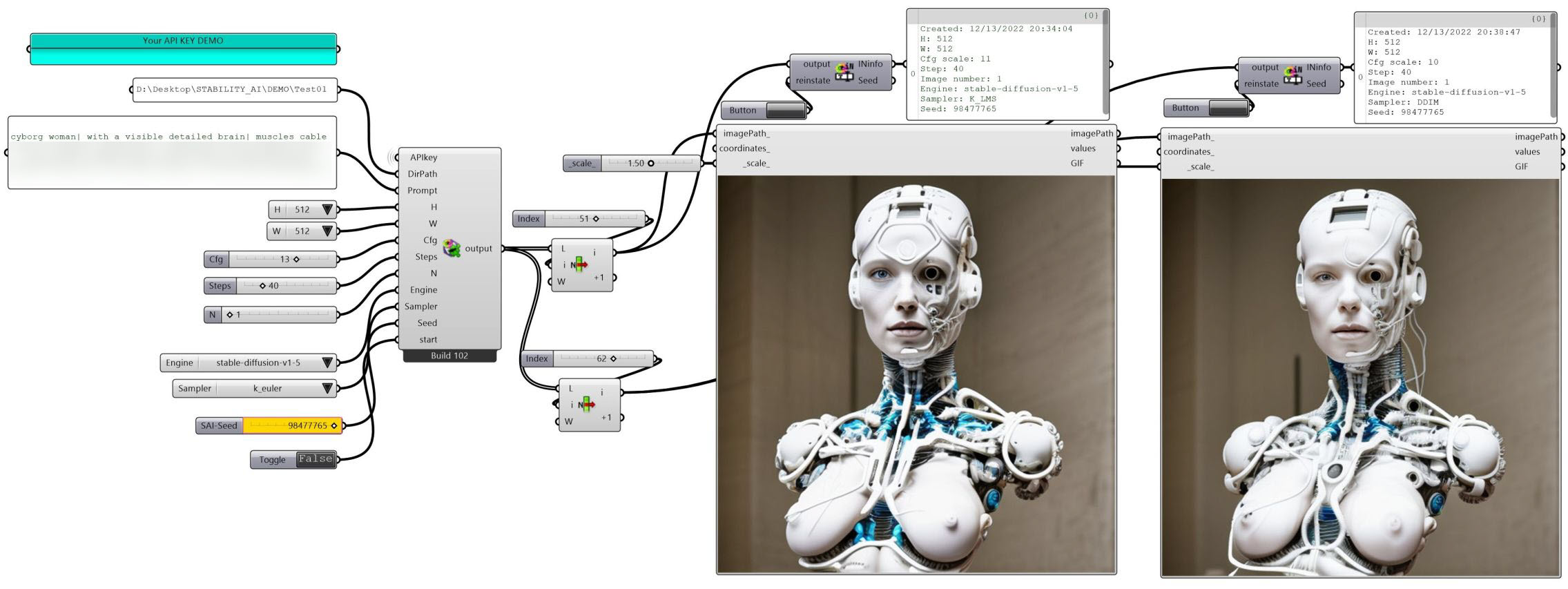

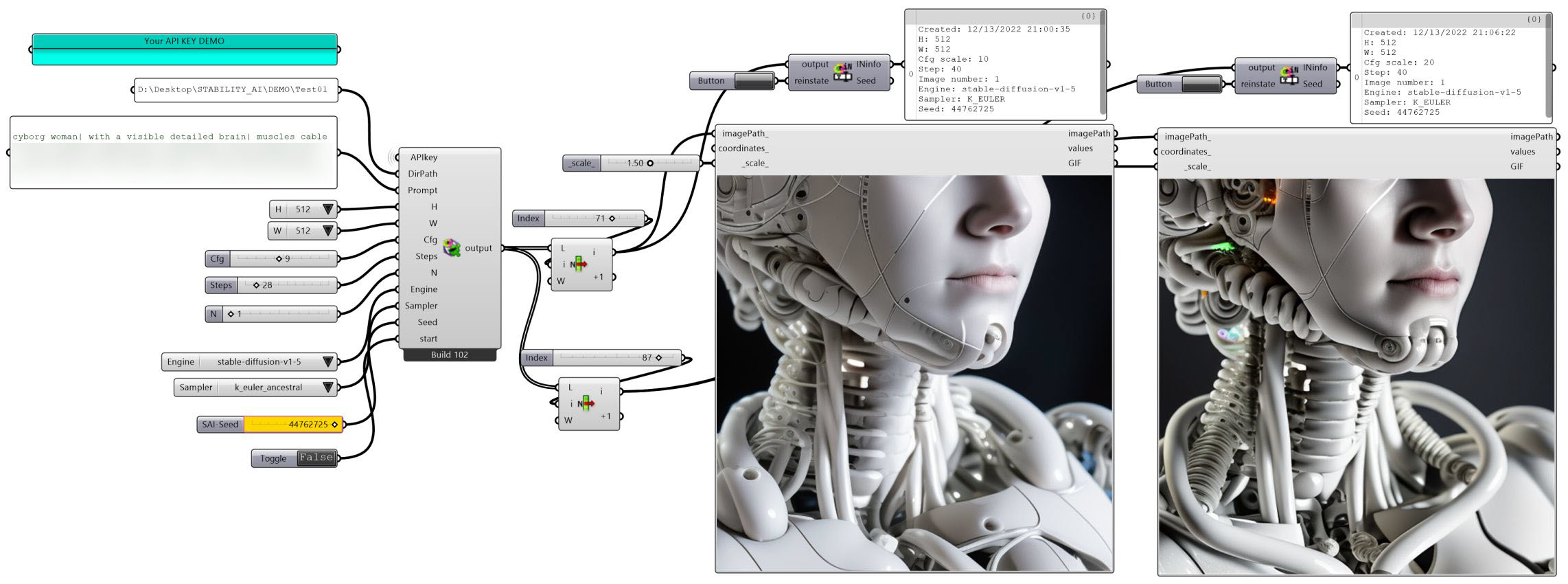

The Stability AI platform has updated its Python library, which allowed me to expand the LA_StabilityAI-GH component with two important features: Engines and Samplers.

Basically, the way you explore your AI design production remains the same as before, but with more control. Moreover, I added the ability to reinstate the random seed of your image production through the new SD-INinfo component. During your exploration stage, you may find a valuable seed, which is generated by default when nothing is passed to the Seed param. You can then quickly reinstate it in a number slider called “SAI-Seed” and read all the image input settings that this component collects from the filenames of images generated by build 102 and above. From this version on, the engine name is also included in both the filename and the txt file generated during the creation stage.
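SD-INinfo reads the settings back from the coded filename. The following is a sketch of that idea. It assumes the hypothetical format `<engine>__<sampler>__seed<N>__steps<N>__cfg<X>__<W>x<H>` rather than the component's real scheme, and `decode_image_name` is illustrative only. The recovered seed is the value you would set on the “SAI-Seed” slider to re-run a promising result.

```python
from pathlib import Path

SEP = "__"  # hypothetical field separator of the illustrative naming scheme


def _drop_prefix(text, prefix):
    """Strip a required prefix such as 'seed' from a field (str.removeprefix needs 3.9+)."""
    if not text.startswith(prefix):
        raise ValueError(f"expected {prefix!r} in {text!r}")
    return text[len(prefix):]


def decode_image_name(filename):
    """Recover settings from a name like
    stable-diffusion-v1-5__k_euler__seed42__steps30__cfg7.5__512x512.png
    """
    name = Path(filename).name
    for ext in (".png", ".txt"):  # strip only known extensions; "cfg7.5" contains a dot
        if name.lower().endswith(ext):
            name = name[: -len(ext)]
            break
    parts = name.split(SEP)
    if len(parts) != 6:
        raise ValueError(f"not a coded filename: {filename!r}")
    engine, sampler, seed, steps, cfg, size = parts
    width, height = size.split("x")
    return {
        "engine": engine,
        "sampler": sampler,
        "seed": int(_drop_prefix(seed, "seed")),
        "steps": int(_drop_prefix(steps, "steps")),
        "cfg_scale": float(_drop_prefix(cfg, "cfg")),
        "width": int(width),
        "height": int(height),
    }
```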

Below is the list of available engines:

- stable-diffusion-v1

- stable-diffusion-v1-5

- stable-diffusion-512-v2-0

- stable-diffusion-768-v2-0

- stable-diffusion-512-v2-1

- stable-diffusion-768-v2-1

The two engines below are more likely to be useful in the CLIP guidance mode, which is not yet included in this component:

- stable-inpainting-v1-0

- stable-inpainting-512-v2-0
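The engine IDs encode the resolution each model was trained at, which makes a sensible default for width and height. The 1.x engines carry no size token because they are 512-pixel models. The following is a small sketch that validates the Engine input against the list above and derives that default. `engine_info` is hypothetical and is not part of stability-sdk or the component.

```python
import re

# Engine IDs as listed in the post.
ENGINES = [
    "stable-diffusion-v1",
    "stable-diffusion-v1-5",
    "stable-diffusion-512-v2-0",
    "stable-diffusion-768-v2-0",
    "stable-diffusion-512-v2-1",
    "stable-diffusion-768-v2-1",
    "stable-inpainting-v1-0",
    "stable-inpainting-512-v2-0",
]


def engine_info(engine):
    """Derive the native resolution and an inpainting flag from an engine ID."""
    if engine not in ENGINES:
        raise ValueError(f"unknown engine {engine!r}")
    match = re.search(r"-(512|768)-", engine)
    return {
        # IDs without a size token are the 1.x models, trained at 512x512.
        "native_resolution": int(match.group(1)) if match else 512,
        "is_inpainting": engine.startswith("stable-inpainting"),
    }
```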

Below is the list of sampler models:

- ddim

- ddpm

- k_dpm_2

- k_dpm_2_ancestral

- k_dpmpp_2m

- k_dpmpp_s2_ancestral

- k_euler

- k_euler_ancestral

- k_heun

- k_lms
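Sampler names are easy to mistype, so a small check before sending a request can help. The following sketch assumes the names exactly as listed above. `check_sampler` is hypothetical and uses Python's standard `difflib` to suggest the closest valid name.

```python
import difflib

# Sampler names as listed in the post.
SAMPLERS = [
    "ddim", "ddpm", "k_dpm_2", "k_dpm_2_ancestral", "k_dpmpp_2m",
    "k_dpmpp_s2_ancestral", "k_euler", "k_euler_ancestral", "k_heun", "k_lms",
]


def check_sampler(name):
    """Return the normalized sampler name, or raise with a 'did you mean' hint."""
    key = name.strip().lower()
    if key in SAMPLERS:
        return key
    hint = difflib.get_close_matches(key, SAMPLERS, n=1)
    message = f"unknown sampler {name!r}"
    if hint:
        message += f"; did you mean {hint[0]!r}?"
    raise ValueError(message)
```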

More control over Stable Diffusion's input parameters, and the ability to switch easily among them, lets you stay focused on the details.

Below are some highlights of the component in action, especially from my AI exploration entitled “Cyberheuristics: the spark in the details”; you can see more results in the AI GenerativeArt gallery.

Ex|CyH|2A – Cyberheuristics: the spark in the details

Ex|CyH|3A – Cyberheuristics: the spark in the details

You can see “the details exploration” of the experiment above in the second half of the video DEMO below.

Download this component from HERE